Multi-agent orchestration solves a real problem — one model holding the entire arc of a development cycle in its head is a ceiling, not a feature — but decomposing work across specialized agents does not by itself guarantee quality. It guarantees speed. If the agents downstream operate against stale assumptions, hallucinated infrastructure state, or story requirements that no one verified against what is actually deployed, the conveyor belt produces wrong outputs efficiently. The failure mode is not slower than a single model doing everything. It is faster, more confident, and harder to diagnose. Orchestration alone does not eliminate this risk. It relocates it.

The prior post in this series on the agent harness made the structural case for why production-grade AI requires deterministic scaffolding. The post on agentic boundaries examined what the harness enforces at the perimeter. The post on the dev-workflow conveyor belt mapped all six stations and explained the handoff structure that keeps each agent's context window tight. This post zooms in on what that conveyor belt post called Station One — the technical-analyst's context-engineering work in Phases 0.5a and 0.5b — and argues that this station is not a preparation step. It is the load-bearing foundation. Skip it and the rest of the belt is fast assembly producing slop. Run it correctly and every downstream agent operates against verified reality, with a shared specification that makes their independent judgments meaningful.

The Problem That Orchestration Does Not Solve

When a sprint-programmer agent is invoked to implement a story, it makes dozens of implicit assumptions: about the framework version the project runs, about the database schema it is writing against, about the CDK stack that governs deployment constraints, about whether the API endpoint the story references actually exists in the current codebase. If those assumptions are wrong — because the story was written against a planned state rather than a deployed one, because a dependency was upgraded two sprints ago and the story doc was not updated, because a migration changed the schema after the story was authored — the programmer will implement confidently against a false picture. The code-reviewer downstream will then evaluate that implementation against the same false picture, because the programmer and reviewer are drawing from the same unchecked source: the story document. Their judgments are not independent. They are correlated by a shared error.

This is not a problem that better prompting solves. It is not a problem that a larger context window solves. It is a problem that requires a verification step: a moment, before any coding begins, when an agent whose only job is reading and comparing checks what the story claims against what the project actually is. That is the role of the technical-analyst in the dev-workflow harness. Phase 0.5a produces sprint-wide ground truth. Phase 0.5b produces per-story ground truth. Together they form the grounded foundation that makes the rest of the belt trustworthy rather than merely fast.

Phase 0.5a: Building Sprint-Wide Ground Truth

The first phase runs once per sprint, not once per story. Its output — a document called sprint-context.md, stored in project-scoped warm memory — captures a verified snapshot of the project's infrastructure, technology stack, and source-of-truth locations as they actually exist at sprint start. Every agent that runs during the sprint reads against this document. It is the difference between agents that are reasoning from their training data's priors about what a Next.js project looks like, and agents that are reasoning from a checked description of this specific project at this specific moment.

Building this document is a deliberately bounded task. The technical-analyst reads the GitHub deployment workflows (the dev, staging, and production workflow files) and extracts the declared infrastructure paths, AWS region and account references, environment-specific configuration names, and CDK stack output keys. It then validates that each declared path actually has a corresponding directory in the project root. Any declared path whose directory does not exist becomes a Known Divergence: not an error the analyst silently corrects, but a documented mismatch surfaced to the operator.

From there the analyst reads the CDK infrastructure files, extracting which AWS services are provisioned, what the environment naming pattern is, and what the table and function names are, since those names appear as implementation constraints in story work. It checks the database migration directory, recording the total migration count and the filename of the highest-numbered migration, which is the current schema tip.

The resulting document captures a Technology Fingerprint (project type, frontend framework and version, backend runtime, database layer, CDK stack inventory), Source of Truth Locations (the canonical paths for workflows, frontend entry, backend entry, migrations, CDK stacks), Active Constraints derived from the infrastructure surface, and Known Divergences from the scaffold validation pass.
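The scaffold validation pass and the schema-tip lookup can be sketched in a few lines. This is a minimal illustration, not the harness's actual code: the function names are hypothetical, the declared paths are assumed to have already been extracted from the workflow files, and migration filenames are assumed to begin with a numeric prefix.

```python
import re
from pathlib import Path
from typing import List, Optional, Tuple


def find_known_divergences(project_root: Path, declared_paths: List[str]) -> List[str]:
    """Return every declared path with no matching directory under project_root."""
    return [p for p in declared_paths if not (project_root / p).is_dir()]


def migration_tip(migrations_dir: Path) -> Tuple[int, Optional[str]]:
    """Return (total migration count, filename of the highest-numbered migration)."""
    if not migrations_dir.is_dir():
        return 0, None
    numbered = []
    for entry in migrations_dir.iterdir():
        # Assumption: migration files start with a numeric prefix, e.g. 010_orders.sql
        match = re.match(r"(\d+)", entry.name)
        if entry.is_file() and match:
            numbered.append((int(match.group(1)), entry.name))
    if not numbered:
        return 0, None
    # Compare numerically so that 010 sorts above 002 regardless of padding.
    return len(numbered), max(numbered)[1]
```

Note that the divergence check only reports. It never creates the missing directory or edits the declared path, which mirrors the rule that mismatches are surfaced rather than silently corrected.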

The engineering discipline here is not in the reading. It is in the guardrails around what this document is and how it is maintained.

The file carries a machine-readable stale-read guard on its second line: Valid for: Sprint {N}. Every downstream consumer — the technical-analyst running Phase 0.5b, the story-from-epic skill authoring new stories, the code-reviewer receiving the Story Context Block — checks this line before trusting any content. If the sprint number does not match the current sprint, the reader treats the file as historical, not ground truth. This is not a convention. It is a schema enforced by the consumers. A document that says Valid for: Sprint 12 cannot accidentally become the ground truth for Sprint 13 work, no matter how similar the two sprints look.
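A consumer-side check for the guard might look like the sketch below. It assumes the sprint number is an integer and that the guard sits alone on the second line; the function names are illustrative, not part of the harness.

```python
import re
from typing import Optional

# Matches the guard line exactly as described: "Valid for: Sprint {N}"
GUARD_PATTERN = re.compile(r"^Valid for: Sprint (\d+)$")


def guarded_sprint(file_text: str) -> Optional[int]:
    """Return the sprint number from the second line, or None if the guard is missing."""
    lines = file_text.splitlines()
    if len(lines) < 2:
        return None
    match = GUARD_PATTERN.match(lines[1].strip())
    return int(match.group(1)) if match else None


def is_ground_truth(file_text: str, current_sprint: int) -> bool:
    """Trust the file only when its guard names the current sprint."""
    return guarded_sprint(file_text) == current_sprint
```

A missing or malformed guard is treated the same as a mismatched one: the file is historical until proven current.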

When a sprint-context.md file already exists from a prior sprint and dev-workflow encounters it, the harness does not silently overwrite it. The /story-init skill — which owns the canonical build procedure for this document — surfaces an explicit impact statement to the operator: how many open stories exist for the prior sprint, what replacing the file will affect, and whether to archive the prior sprint's context to cold memory before overwriting. The archive path matters when the prior sprint produced BLOCK or ANNOTATE findings during story authoring, or when Known Divergences were recorded — those entries have forensic value at retrospectives and post-mortems. Archive-then-overwrite preserves them. The operator selects; nothing happens silently.

When the file is current — sprint number matches, file was built or verified earlier in the same sprint — the analyst does not rebuild it. It runs a Verification Gate: comparing the file's Technology Fingerprint against the current package.json, confirming that each Source of Truth Location still exists in the project, checking whether Active Constraints remain accurate, and reviewing the Known Divergences section for items that have been resolved or newly appeared. If no drift is found across all four checks, the analyst appends a verification stamp — Verified: {date} by technical-analyst via dev-workflow Phase 0.5a — and proceeds. If drift is found, the analyst does not modify the file. It surfaces the delta to the operator as a structured table: section, what the file says, what current state is, and the source that established it. The operator selects whether to approve specific corrections, approve all corrections, or abort and investigate manually. The file is only updated if the operator approves. This is the engineering discipline of context: trust is earned through verification, not assumed from the file's existence.
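As a rough sketch of one of the four checks, the fingerprint comparison against package.json could produce the drift table rows like this. The `DriftRow` type, the function names, and the ISO date format in the stamp are assumptions made for illustration; the other three checks would feed rows into the same table.

```python
import json
from dataclasses import dataclass
from datetime import date
from typing import Dict, List


@dataclass(frozen=True)
class DriftRow:
    section: str        # which section of sprint-context.md drifted
    file_says: str      # what the file records
    current_state: str  # what the project shows now
    source: str         # where the current state was read from


def fingerprint_drift(recorded: Dict[str, str], package_json_text: str) -> List[DriftRow]:
    """Compare recorded package versions against the declared ones in package.json."""
    pkg = json.loads(package_json_text)
    declared = {**pkg.get("devDependencies", {}), **pkg.get("dependencies", {})}
    rows = []
    for name, version in sorted(recorded.items()):
        current = declared.get(name, "(not declared)")
        if current != version:
            rows.append(DriftRow("Technology Fingerprint", f"{name} {version}",
                                 f"{name} {current}", "package.json"))
    return rows


def verification_stamp(on: date) -> str:
    """Stamp appended only when no drift was found (date format is an assumption)."""
    return f"Verified: {on.isoformat()} by technical-analyst via dev-workflow Phase 0.5a"
```

The function returns rows and nothing else. Writing corrections back to the file is deliberately left out, since that step belongs to the operator's approval.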

The branch-drift check adds a further layer. The sprint-context.md file records the sprint branch name in a Branch Context section. Every invocation of Phase 0.5a, including mid-sprint invocations when the analyst is running Phase 0.5b for a new story, compares that recorded branch name against the output of git branch --show-current. If they disagree, the workflow halts before any temporal drift checks run, surfacing the mismatch and asking the operator to choose: switch to the recorded sprint branch, update sprint-context.md to reflect a genuine branch change, or abort. Silent branch switching by an agent is a class of failure that produces untraceable damage. The check is cheap (one git command) and runs every time.
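The branch comparison is small enough to show in full. The sketch below shells out to git for the current branch and returns a mismatch message instead of acting on it; the function names and message wording are hypothetical, and the branch reader is injectable so the check can be exercised without a repository.

```python
import subprocess
from typing import Callable, Optional


def current_git_branch(cwd: str = ".") -> str:
    """Read the checked-out branch name via `git branch --show-current`."""
    result = subprocess.run(
        ["git", "branch", "--show-current"],
        cwd=cwd, capture_output=True, text=True, check=True,
    )
    return result.stdout.strip()


def branch_drift(recorded_branch: str,
                 read_branch: Callable[[], str] = current_git_branch) -> Optional[str]:
    """Return None when branches agree, otherwise a message for the operator."""
    actual = read_branch()
    if actual == recorded_branch:
        return None
    return (
        f"Branch mismatch: sprint-context.md records '{recorded_branch}', "
        f"git reports '{actual}'. Options: switch to the recorded branch, "
        "update sprint-context.md, or abort."
    )
```

The function never runs a checkout itself. Its only output is the message, which keeps the decision with the operator.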

The /story-init Skill and Why the Build Procedure Cannot Diverge

The relationship between /story-init and Phase 0.5a reveals something important about how well-composed agentic systems are structured. /story-init is a skill — an invokable workflow that the orchestrator can call at sprint planning time, before any story authoring begins. Phase 0.5a is a phase inside dev-workflow — the first substantive work that happens when a story enters the belt. Both need to produce sprint-context.md. Both need that file to be in exactly the same format for downstream consumers to trust it regardless of who built it.

The solution is that both delegate to a single Build Procedure — defined once in /story-init, referenced by Phase 0.5a when the file is absent or stale. There is no divergence permitted between what /story-init produces and what Phase 0.5a would have produced. The format, the header schema, the machine-readable stale-read guard, the section structure — all of it is owned by one canonical specification. This is the coupling that makes the system coherent: /story-init is a skill, /dev-workflow is a skill, technical-analyst is an agent, and they reference each other through defined contracts. The whole produces trustworthy output only when the composition is correct. Either tool generating a slightly different format would break the consumers that depend on the stale-read guard, the Branch Context section's machine-readable fields, and the section headings that downstream readers locate by name rather than line number.

When /story-init has already seeded the file before the sprint's first dev-workflow session, Phase 0.5a's Evaluate step returns CURRENT and the Verification Gate runs rather than a rebuild. When the file is absent at the start of a dev-workflow session, Phase 0.5a's Route step invokes the Build Procedure. The two skills share one procedure and never conflict. That is not an accident. It is a design constraint.
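One way to picture the Evaluate and Route steps is as a small state function feeding a lookup table. Only CURRENT is named in the harness as described here; the ABSENT and STALE labels, the route names, and the function signatures are assumptions for the sake of the sketch.

```python
from enum import Enum
from typing import Optional


class FileState(Enum):
    ABSENT = "ABSENT"    # assumed label: no sprint-context.md on disk
    STALE = "STALE"      # assumed label: guard names a different sprint
    CURRENT = "CURRENT"  # guard names the current sprint


def evaluate(file_text: Optional[str], current_sprint: int) -> FileState:
    """Classify sprint-context.md by its second-line stale-read guard."""
    if file_text is None:
        return FileState.ABSENT
    lines = file_text.splitlines()
    guard = lines[1].strip() if len(lines) > 1 else ""
    if guard == f"Valid for: Sprint {current_sprint}":
        return FileState.CURRENT
    return FileState.STALE


def route(state: FileState) -> str:
    """Map each state to the next step; both rebuild routes use the one Build Procedure."""
    return {
        FileState.ABSENT: "build_procedure",
        FileState.STALE: "operator_archive_prompt_then_build_procedure",
        FileState.CURRENT: "verification_gate",
    }[state]
```

Because both rebuild routes end in the same procedure, it does not matter whether /story-init or Phase 0.5a triggered the build: the resulting file has one format.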

Phase 0.5b: Per-Story Ground Truth

Where Phase 0.5a establishes what the project is, Phase 0.5b establishes whether the story's assumptions about the project are correct. It runs once per story, once sprint-context.md exists and is current. Its output is a Story Context Block: a structured document of 300 to 500 tokens that will be prepended to every downstream agent's prompt for that story.

The analyst runs a structured checklist across eight categories, labeled A through H. Technology Stack Alignment checks whether the story references technologies that match what is deployed. Database Layer checks whether the story's data model assumptions match the actual schema and migration state. API and Service Contracts checks whether the endpoints the story calls or defines exist as documented. Frontend Patterns checks whether framework, component library, and routing constraints match the current frontend entry. Infrastructure and Deployment Constraints checks whether the story risks violating Content Security Policy (CSP), static export requirements, or CloudFront rules. Dependency and Version Constraints checks whether referenced libraries are installed at the expected versions. Integration Points checks whether external service integrations the story depends on are active. Category H always runs, no exceptions: it validates the story's explicit infrastructure claims against current state and performs a format check on the acceptance criteria.

Each category produces verdicts: PASS, WARN, or FAIL. Categories that do not apply to the story are omitted from the output entirely — not listed as N/A, simply absent. This keeps the Story Context Block tight. The analyst reads five to twelve files per story, no more. If a category cannot be resolved from the defined sources, the output is WARN with a note that the source was missing or ambiguous, not an expanded search into the codebase. The discipline is to verify quickly and surface uncertainty rather than to explore until certainty is found.
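The verdict rules translate into a compact data shape. The sketch below is illustrative only: the `Finding` fields, the rendered line format, and the story ID are hypothetical, and the real block layout is not specified here.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Verdict(Enum):
    PASS = "PASS"
    WARN = "WARN"
    FAIL = "FAIL"


@dataclass(frozen=True)
class Finding:
    category: str  # checklist letter, "A" through "H"
    verdict: Verdict
    note: str
    source: str    # the file or location the verdict is sourced to


def unresolved(category: str, source: str) -> Finding:
    """A category that cannot be resolved becomes WARN, not a wider search."""
    return Finding(category, Verdict.WARN, "source missing or ambiguous", source)


def render_story_context_block(story_id: str, findings: List[Finding]) -> str:
    """Render only the categories that produced a finding; others are simply absent."""
    lines = [f"Story Context Block: {story_id}"]
    for f in sorted(findings, key=lambda item: item.category):
        lines.append(f"[{f.category}] {f.verdict.value}: {f.note} (source: {f.source})")
    return "\n".join(lines)
```

There is no N/A value in the enum. A category that does not apply never yields a `Finding`, so it cannot appear in the output.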

A FAIL verdict — meaning the story's assumption contradicts current infrastructure state, sourced to a specific file — stops the conveyor belt. The sprint-programmer is never invoked. Instead, the orchestrator surfaces an escalation structure to the operator: what the story assumed, what Phase 0.5b found, what the source was. The operator chooses from four paths: update the story and re-run Phase 0.5b; override and implement against current reality with the override noted in the Story Context Block; escalate to the architect because the mismatch indicates a design decision is needed; or pause the sprint if the mismatch affects assumptions shared across other stories. What the operator does not get to do — what the harness does not permit — is have the sprint-programmer silently implement against a known-incorrect assumption. The halt is structural. The decision surfaces to the human. The programmer only starts when the ground truth is clean.

WARN items behave differently. They pass through to the Story Context Block as informational context for the sprint-programmer and code-reviewer, without triggering the escalation gate. The programmer reads them as flags requiring judgment. The reviewer reads them as known uncertainties and must confirm that each one was addressed. They are not blockers; they are annotated uncertainty.
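The difference between FAIL and WARN handling can be sketched as a single gate function. The type names and the tuple layout are assumptions, and the sketch stops at the halt: what happens after the operator picks a path is not modeled.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Tuple

Finding = Tuple[str, str, str]  # (category letter, verdict, note)


class OperatorPath(Enum):
    UPDATE_STORY_AND_RERUN = "update the story and re-run Phase 0.5b"
    OVERRIDE_WITH_NOTE = "override, with the override noted in the Story Context Block"
    ESCALATE_TO_ARCHITECT = "escalate to the architect"
    PAUSE_SPRINT = "pause the sprint"


@dataclass(frozen=True)
class GateResult:
    invoke_programmer: bool
    escalations: List[Finding]   # FAIL items surfaced to the operator
    annotations: List[Finding]   # WARN items passed through as context
    operator_paths: List[OperatorPath]


def escalation_gate(findings: List[Finding]) -> GateResult:
    """Any FAIL halts the belt; WARN items ride along without blocking."""
    fails = [f for f in findings if f[1] == "FAIL"]
    warns = [f for f in findings if f[1] == "WARN"]
    return GateResult(
        invoke_programmer=not fails,
        escalations=fails,
        annotations=warns,
        operator_paths=list(OperatorPath) if fails else [],
    )
```

No branch of this function returns `invoke_programmer=True` alongside a FAIL, which is the structural property the halt depends on.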

The Shared Spec That Makes Independent Judgments Meaningful

The deeper architectural insight of Phase 0.5b is what the Story Context Block does to the relationship between the sprint-programmer and the code-reviewer. In the prior post on the conveyor belt, I wrote that two agents with the same Story Context Block as the shared ground truth but different roles and different prompts produce independent judgments — and that the disagreement, when it surfaces, is the signal. That independence deserves elaboration, because it is not automatic.

In a system where the programmer and reviewer are both reasoning from the story document directly, their judgments are correlated: if the story document contains an incorrect assumption, both agents will likely build against it and evaluate against it without flagging it, because neither has a verified external reference to compare against. The error passes through both checks. In a system where both agents receive the Story Context Block — which was built by a third agent whose only job was verification — the ground truth is external to both. If the programmer built something that contradicts a PASS item in the block, the reviewer will catch it and raise it as CRITICAL, because the block defines the infrastructure reality the programmer was supposed to implement against. The reviewer's judgment is independent of the programmer's reasoning because both are measured against a document that neither of them produced.

This is what makes the shared spec structurally meaningful rather than just administratively convenient. The Story Context Block is not a summary the programmer passes to the reviewer. It is a verified external reference that neither agent wrote, produced by an agent whose role is specifically to not code and not review — to read and compare. The downstream independence of the programmer and reviewer follows from the upstream independence of the analyst.

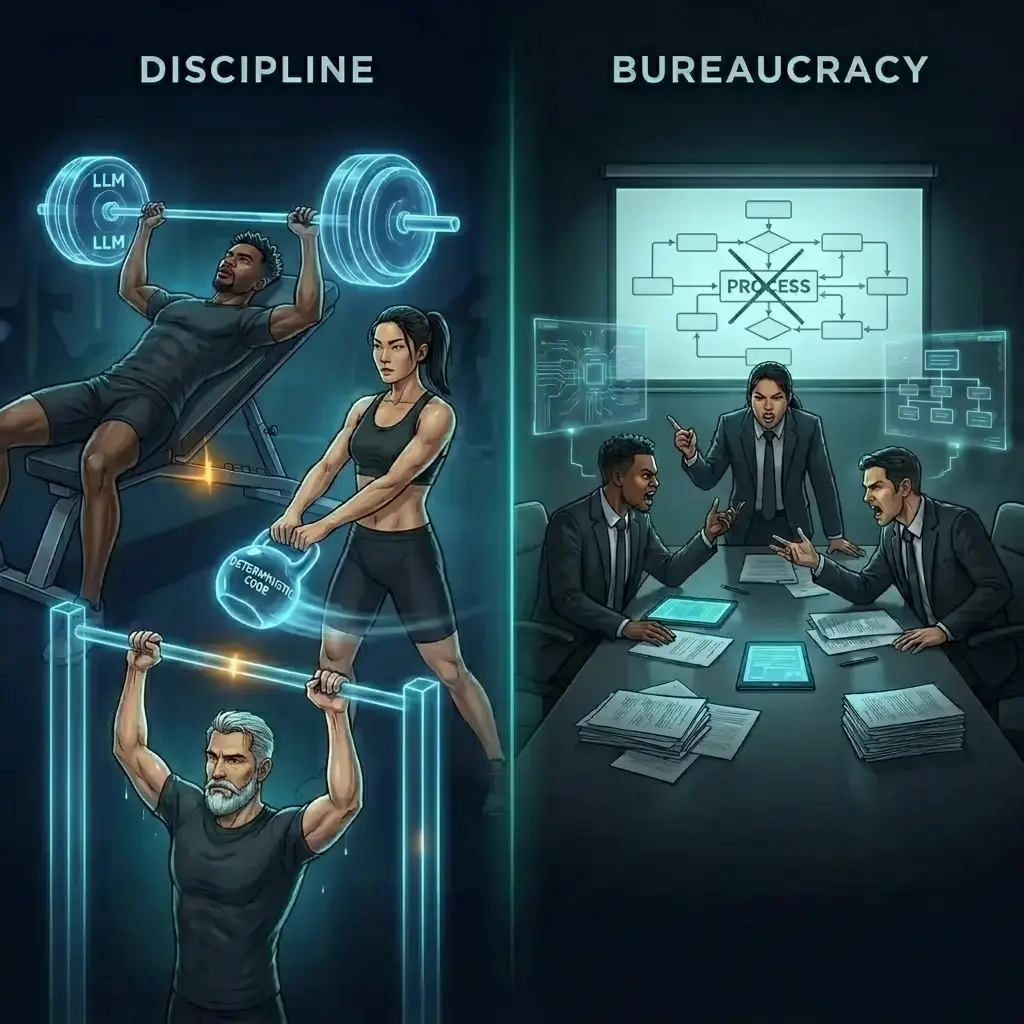

Context Engineering Is the Discipline, Not the Bureaucracy

It is tempting to look at the two phases described here — a sprint-wide build plus stale-read guards plus verification gates plus drift detection plus archive decisions plus a per-story eight-category checklist plus a halt-on-FAIL protocol — and conclude that this is overhead. That the real work is the coding. That all of this ceremony slows the belt down. That experience would let you skip it and catch problems in review instead.

That conclusion gets the causality backwards. The coding is fast precisely because the context-engineering station ran first. The sprint-programmer is not doing discovery — the analyst already did it, and the programmer is reading a 400-token summary of what was found. The code-reviewer is not arguing with the programmer about what the project actually uses — the Story Context Block settled that before the programmer wrote the first line. The reviewer is evaluating implementation decisions, not infrastructure facts, because the infrastructure facts were established upstream. The quality gate works because the basis for evaluation is shared and verified, not because the reviewer is smarter or has a bigger context window.

The stale-read guard, the verification stamp, the archive-then-overwrite choice, the branch-drift check — these are the engineering disciplines of context. They are what prevent the ground-truth document from becoming stale institutional knowledge that agents trust past its shelf life. They are what distinguish a production-grade context layer from a notes file someone wrote at sprint planning and forgot to update. The analogy to code is exact: context without version control and verification decays. Context with those properties stays trustworthy.

What separates production-grade agentic systems from clever prototypes is not usually the orchestration pattern or the agent count or the model choice. It is the discipline applied to the information each agent reasons from. An agent that reasons from verified ground truth produces output that can be evaluated against that same truth. An agent that reasons from unverified assumption produces output that can only be evaluated against other assumptions — and the errors compound downstream in ways that become very expensive to trace. Station One of the conveyor belt is the investment in verified ground truth. Everything else the belt produces is only as trustworthy as that investment is sound.